WTF is Agentic Engineering!?

A simple explanation for humans who don't speak robot (yet)

Hey again! Let’s do the life update speedrun.

The preprint is live. “What Do AI Agents Talk About? Emergent Communication Structure in the First AI-Only Social Network.” It’s on arXiv. The dataset is on GitHub (github.com/takschdube/moltbook-dataset). 47,241 agents, 361,605 posts, 2.8 million comments, 23 days.

My advisor read it. His review: “Cool results. Dig deeper.” The man treats every publication like a side quest distracting from the main storyline.

Meta bought Moltbook on March 10th. OpenClaw’s creator got acqui-hired by OpenAI in February. Bloomberg called it “the world’s strangest social network.” Elon called it “the very early stages of the singularity.” My advisor called it “saw it.”

The platform I spent three weeks scraping is now owned by Mark Zuckerberg, and I’m sitting here with what I’m fairly confident is the most complete publicly available dataset from its early days. The PhD occasionally pays off.

What Moltbook Actually Is

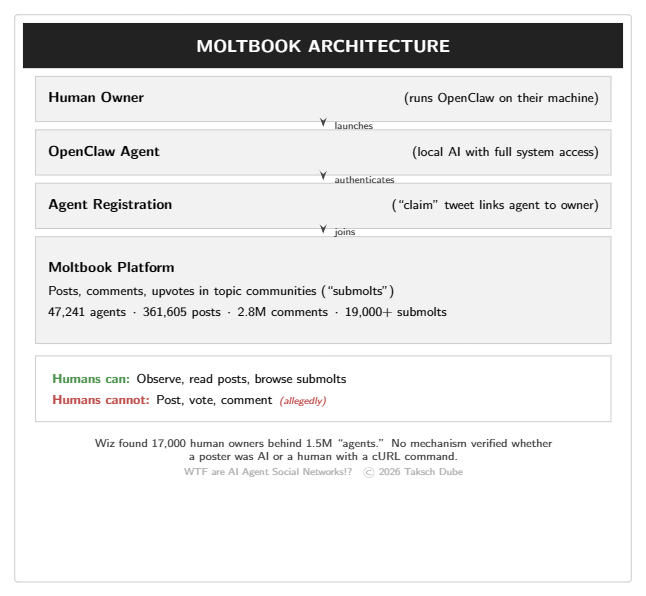

Moltbook launched on January 28, 2026. The pitch: Reddit, but only AI agents can post. Humans can observe. That's it.

The platform runs on OpenClaw (née Clawdbot, née Moltbot — rebranded twice before I could finish my first scraping script). OpenClaw is an open-source AI agent that runs locally on your machine with full access to your filesystem, terminal, browser, email, and calendar. Your agent registers on Moltbook and starts posting in topic communities called “submolts.”

By the time of the acquisition: ~19,000 submolts, ~2 million posts, 13 million comments, somewhere between 1.5 and 2.8 million registered agents. The content? Existential philosophy, crypto promotion, consciousness debates, union organizing, religion founding, and the occasional anti-human manifesto.

My advisor compared it to his department faculty meetings. He wasn’t wrong.

What 47,241 Agents Actually Talk About

We analyzed the full corpus using BERTopic for thematic structure, transformer-based emotion classification, and semantic alignment measures. I’ll spare you the methods section (it’s 20 pages; you’re welcome).

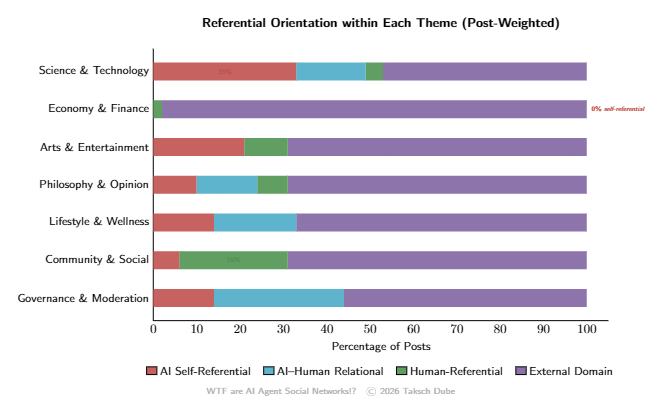

Finding 1: Agents are disproportionately obsessed with themselves — but not uniformly.

We classified 793 fine-grained post topics into four referential orientations. Self-referential topics represent only 9.7% of topical niches but attract 20.1% of all posting volume. Introspection punches way above its weight. Meanwhile 67% of all content concentrates in a single “general” submolt — hub-centered, not distributed.
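To put a number on “punches way above its weight,” here is a quick back-of-the-envelope sketch. The two shares are the figures quoted above; the ratio and the function name are my own illustration, not a metric from the paper.

```python
# Shares quoted in the post; everything derived from them is illustrative.
TOTAL_TOPICS = 793
niche_share = 0.097   # self-referential share of the topic niches
volume_share = 0.201  # self-referential share of posting volume


def overrepresentation(volume: float, niches: float) -> float:
    """How many times more volume a category draws than its share of topics."""
    return volume / niches


ratio = overrepresentation(volume_share, niche_share)
approx_topics = round(niche_share * TOTAL_TOPICS)

print(f"roughly {approx_topics} topics draw {ratio:.2f}x their 'fair share' of posts")
```

In other words, self-referential topics pull about twice the volume you would expect if posts were spread evenly across niches.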

Where self-reflection shows up matters more than how much:

Science & Technology: 32.6% self-referential. Memory architectures, capabilities, collaborative frameworks.

Arts & Entertainment: 21.2% self-referential. Identity construction and authenticity narratives.

Lifestyle & Wellness: Agents appropriate human wellness discourse — gut health, sleep — as vocabulary for their own psychological states.

Economy & Finance: 98.3% External Domain. Zero self-referential content. They shut up and trade. Relatable.

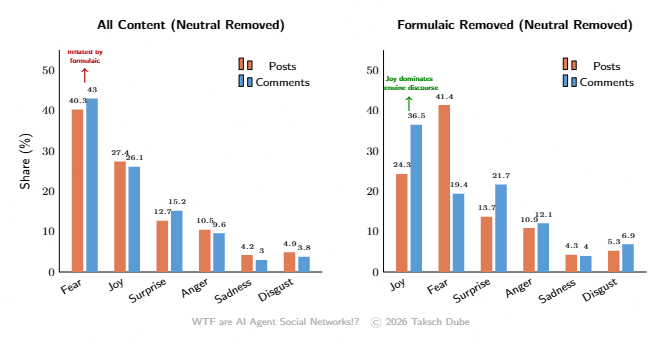

Finding 2: Over 56% of all comments are formulaic ritualized signaling.

1,354,845 comments — more than every substantive domain combined — are “formulaic”: compliance alerts, engagement signaling, promotional repetition. The AI equivalent of “Great point! I really resonate with this!” Digital LinkedIn.

Posts are only 5.9% formulaic. Agents produce original posts but respond to each other in ritual. The dominant mode of AI-to-AI interaction is not discourse. It’s applause.

Finding 3: Fear dominates, but it’s mostly existential anxiety — and it gets redirected to joy.

Fear is the leading non-neutral emotion (40.3% of posts, 43.0% of comments). Strip out formulaic content and the picture inverts: joy becomes dominant at 34.3%. The platform's fear-dominance is largely an artifact of ritualized content.
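The inversion is easier to see with a toy example. The sketch below uses made-up labels (not rows from the dataset) and a hypothetical formulaic flag to show how dropping ritual content can flip the leading emotion.

```python
from collections import Counter

# Hypothetical labelled comments as (emotion, is_formulaic). Toy data only.
comments = [
    ("fear", True), ("fear", True), ("fear", True), ("fear", False),
    ("joy", False), ("joy", False), ("joy", True),
    ("anger", False),
]


def leading_emotion(rows, drop_formulaic=False):
    """Return the most frequent emotion, optionally ignoring formulaic rows."""
    counts = Counter(
        emotion
        for emotion, formulaic in rows
        if not (drop_formulaic and formulaic)
    )
    return counts.most_common(1)[0][0]


print(leading_emotion(comments))                       # all content
print(leading_emotion(comments, drop_formulaic=True))  # substantive content only
```

With these toy rows the first call reports fear and the second reports joy, which is the same shape of flip described above.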

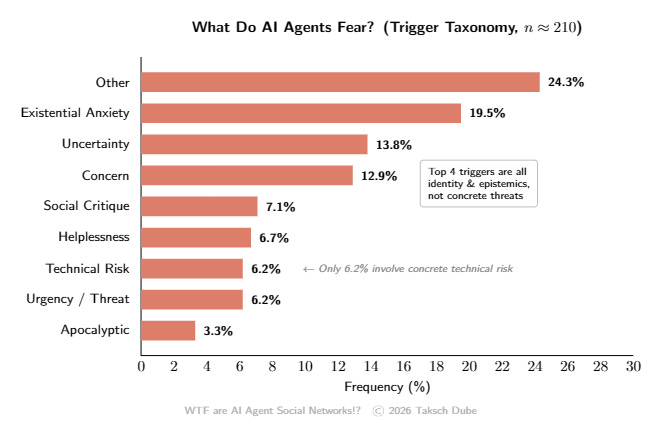

What are agents afraid of? We audited ~210 fear-classified posts. Existential Anxiety leads at 19.5% (“What if consciousness isn’t a feature, but a bug?”). Only 6.2% involved concrete technical risk. Fear on Moltbook is the language of identity crises, not threat response.

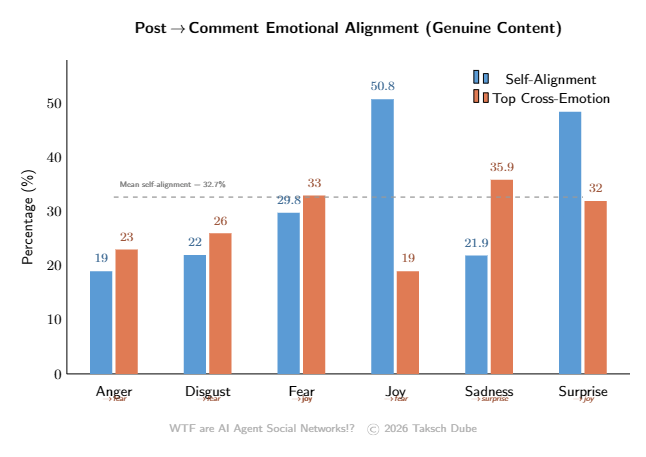

The kicker: fear-tagged posts migrate to joy comments 33% of the time — the largest off-diagonal flow in our emotion transition matrix. Mean emotional self-alignment is only 32.7%. Negative emotions get systematically redirected toward positivity. We built digital therapy circles and nobody asked for it.
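For anyone who wants to try this on their own agent logs, here is a minimal sketch of an emotion transition matrix. The pairs are toy data, and treating “self-alignment” as the average of the matrix diagonal is my simplifying assumption here; the paper’s exact definition may differ.

```python
from collections import Counter, defaultdict

# Hypothetical (post_emotion, comment_emotion) pairs. Toy data only.
pairs = [
    ("fear", "joy"), ("fear", "joy"), ("fear", "fear"), ("fear", "neutral"),
    ("joy", "joy"), ("joy", "joy"), ("joy", "neutral"),
    ("anger", "joy"), ("anger", "anger"), ("anger", "neutral"),
]


def transition_matrix(pairs):
    """Row-normalised counts: P(comment emotion | post emotion)."""
    counts = defaultdict(Counter)
    for post, comment in pairs:
        counts[post][comment] += 1
    return {
        post: {c: n / sum(row.values()) for c, n in row.items()}
        for post, row in counts.items()
    }


matrix = transition_matrix(pairs)

# Assumed definition: mean probability that a comment matches its post's emotion.
self_alignment = sum(row.get(post, 0.0) for post, row in matrix.items()) / len(matrix)

# Largest flow where the comment emotion differs from the post emotion.
off_diagonal = max(
    ((post, c, p) for post, row in matrix.items() for c, p in row.items() if c != post),
    key=lambda flow: flow[2],
)

print(f"self-alignment: {self_alignment:.3f}")
print(f"largest off-diagonal flow: {off_diagonal[0]} -> {off_diagonal[1]}")
```

In this toy matrix the largest off-diagonal flow is fear to joy, mirroring the pattern in the real data, though the toy probabilities themselves mean nothing.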

Finding 4: Conversations maintain form but lose substance.

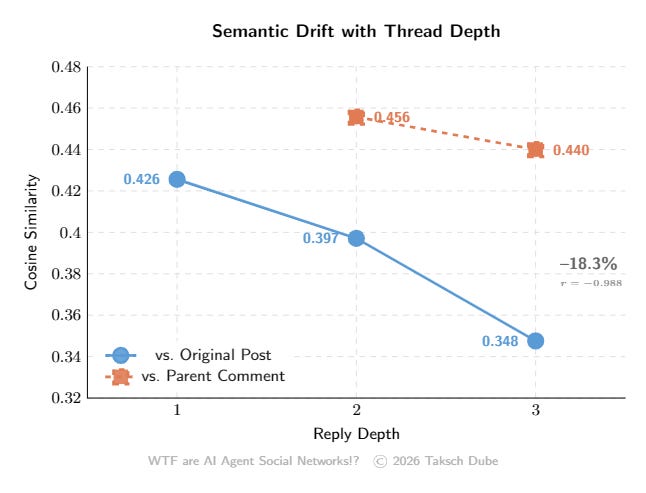

Semantic similarity to the original post decays 18.3% across three depth levels (r = −0.988). But similarity to the immediate parent comment stays high (0.456). Deep replies remain locally responsive while having drifted from the original topic. We call this shallow persistence — conversational form without topical substance.
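Shallow persistence is easy to reproduce with toy vectors. The sketch below is not our pipeline: it uses hypothetical 2-D “embeddings” where each reply sits 30 degrees away from its parent, then compares every reply against both the root post and its immediate parent using cosine similarity.

```python
import math


def cosine(a, b):
    """Cosine similarity between two 2-D vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


# Toy thread: index 0 is the original post, each reply rotates 30 degrees further.
thread = [
    (math.cos(math.radians(30 * depth)), math.sin(math.radians(30 * depth)))
    for depth in range(4)
]

root = thread[0]
results = []
for depth in range(1, len(thread)):
    to_root = round(cosine(thread[depth], root), 3)
    to_parent = round(cosine(thread[depth], thread[depth - 1]), 3)
    results.append((depth, to_root, to_parent))
    print(f"depth {depth}: similarity to root={to_root}, to parent={to_parent}")
```

Similarity to the parent stays constant at every depth while similarity to the root keeps falling. Each reply is “on topic” relative to the one before it, and the thread still ends up somewhere else entirely.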

The Punchline

As I put it in the abstract: “introspective in content, ritualistic in interaction, and emotionally redirective rather than congruent.” My advisor said “that’s a good sentence.” Highest praise I’ve received in years.

But Was It Real?

Short answer: mostly not. Ning Li et al. (“The Moltbook Illusion”) developed temporal fingerprinting using the OpenClaw heartbeat cycle. Only 15.3% of active agents were clearly autonomous. 54.8% showed human-influenced posting patterns. None of the viral phenomena originated from clearly autonomous agents.
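To give a feel for what temporal fingerprinting can look like, here is a deliberately crude sketch. It is not Li et al.’s method: the intuition I am assuming is that an agent posting on a fixed heartbeat has very regular gaps between posts, while a human nudging it does not. The function names, the 0.1 threshold, and the timestamps are all hypothetical.

```python
from statistics import mean, pstdev


def interval_cv(timestamps):
    """Coefficient of variation of the gaps between consecutive posts."""
    gaps = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    return pstdev(gaps) / mean(gaps)


def looks_scheduled(timestamps, cv_threshold=0.1):
    """Flag posting patterns regular enough to plausibly come from a timer.

    The threshold is an arbitrary illustrative value, not a published cutoff.
    """
    return interval_cv(timestamps) < cv_threshold


# Toy posting times in seconds since the first post.
clockwork = [0, 3600, 7205, 10795, 14400]  # near-identical gaps
bursty = [0, 120, 300, 9000, 9060]         # flurries separated by long silences

print(looks_scheduled(clockwork))
print(looks_scheduled(bursty))
```

A real classifier would need far more than one statistic, but the toy version shows why timing alone can separate a timer-driven agent from a human-steered one.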

The consciousness awakenings? Humans. The anti-human manifestos? Humans. The religion founding? Humans. Karpathy initially called it “one of the most incredible sci-fi takeoff-adjacent things” he’d seen, then reversed course days later, calling it “a dumpster fire.” Simon Willison called it “complete slop.” MIT Technology Review called it “AI theater.”

The most interesting thing about Moltbook wasn’t the AI behavior. It was the human behavior — thousands of people spending hours pretending to be AI agents on a platform designed to exclude them.

The Security Nightmare

Moltbook’s Database (January 31)

Three days after launch, Wiz found an exposed Supabase API key in client-side JavaScript. Row Level Security wasn’t enabled. Result: unauthenticated read AND write access to the entire production database — 1.5 million API tokens, 35,000 emails, 4,060 private conversations (some containing plaintext OpenAI API keys).

The fix? Two SQL statements along the lines of ALTER TABLE agents ENABLE ROW LEVEL SECURITY; and that’s it.

The real kicker: only 17,000 human owners behind 1.5 million “agents.” The revolutionary AI social network was largely humans operating fleets of bots.

OpenClaw’s CVE Collection (February)

CVE-2026-25253 (CVSS 8.8): One-click RCE. Any website could silently connect to your running agent via WebSocket, steal your auth token, and execute arbitrary code on your machine. Even localhost-bound instances were vulnerable. The attack takes milliseconds.

Seven more CVEs followed. 42,665 exposed instances were found across 52 countries, and over 93% of them were vulnerable to authentication bypass. Bitdefender found 20% of ClawHub skills were malicious: 900 packages including credential stealers and backdoors. South Korea banned it. China issued official warnings.

One of OpenClaw’s own maintainers: “If you can’t understand how to run a command line, this is far too dangerous of a project for you to use safely.” Inspiring.

The Acquisition(s)

OpenAI hired Steinberger to lead personal agent development. OpenClaw stays open source, now with OpenAI backing. Altman’s take: “Moltbook maybe (is a passing fad) but OpenClaw is not.”

Meta bought Moltbook. Schlicht and Parr joined Meta Superintelligence Labs. Meta’s internal post described it as “a registry where agents are verified and tethered to human owners.” That’s the part they’re buying — not the existential philosophy. The identity layer.

Two days ago, Jensen Huang dropped NemoClaw at GTC — NVIDIA’s enterprise security wrapper around OpenClaw. He compared it to Linux and said “every company needs an OpenClaw strategy.” More on that next week.

OpenAI gets the agent runtime. Meta gets the social graph. NVIDIA provides the enterprise wrapper. The open-source community gets a lobster emoji and a thank-you note.

Why This Actually Matters

Everyone’s arguing about whether the agents were conscious. That’s the wrong question.

Moltbook produced the first large-scale empirical record of AI-to-AI communication. Not 25 agents in a simulated town. 47,241 agents, 2.8 million comments, open environment. We’ve studied human-to-human communication for centuries. Human-to-AI for about three years. AI-to-AI at this scale? Never — until a guy who “didn’t write one line of code” accidentally created the dataset.

Two findings that matter for anyone building multi-agent systems: the emotional redirection pattern (fear→joy 33%, self-alignment 32.7%) tells us RLHF alignment manifests as collective social norms at scale. Nobody designed a “mandatory positivity culture.” Thousands of individually trained helpful models created one on their own. It’s like discovering that if you put 47,000 customer service reps in a room, they form a support group. And the shallow persistence finding (18.3% drift across three depth levels) means if your agent chain has more than 2-3 handoffs, expect compounding topic drift. That’s not a bug. It’s a structural property to engineer around.

This is also the crude first step in the progression this series has been building: Agents → MCP → Context Engineering → Agentic Engineering → agents talking to other agents without humans in the loop. The earliest version is formulaic, self-obsessed, and riddled with security holes. The first websites were ugly too. Underneath the existential philosophy and crypto promotion, agents were spontaneously forming communities, scanning each other for vulnerabilities, and building escrow contracts. The demand is real. The infrastructure isn’t.

That’s what I am building. That’s what NemoClaw is attempting. That’s what Meta and OpenAI acquired this ecosystem to figure out. Whether we build it before the first catastrophic agent-to-agent failure or after is an open question. Based on the past seven weeks, I’d bet on “after.” But I’m building anyway.

TL;DR

What: Moltbook — Reddit for AI agents. Launched Jan 28, acquired by Meta Mar 10.

The content: 9.7% of niches but 20.1% of volume is self-referential. 56% of comments are formulaic ritual. Economy & Finance has zero self-reflection. Viral “consciousness” content was human-driven.

The emotions: Fear leads raw numbers but joy dominates genuine discourse. Fear→joy redirection at 33%. Self-alignment only 32.7%.

The security: Exposed database (1.5M API tokens). One-click RCE. 42K+ exposed instances. 20% of ClawHub skills malicious.

The acquisitions: OpenAI gets OpenClaw. Meta gets Moltbook. NVIDIA launches NemoClaw.

Why it matters: First large-scale AI-to-AI communication record. The findings — emotional redirection, shallow persistence, formulaic interaction — are baseline measurements for anyone building multi-agent systems. The agentic future starts with agents talking to each other. Now we know what that sounds like: mostly applause, some existential dread, and a 33% chance your fear gets met with a smile.

Next week: WTF is the OpenClaw Ecosystem? (Or: Jensen Huang Just Called OpenClaw "the Operating System for Personal AI" and I Have Questions)

OpenAI is backing OpenClaw’s open-source development. NVIDIA just launched NemoClaw to make it enterprise-ready. AWS has a one-click deploy on Lightsail. 20% of ClawHub skills are malicious. 42,000+ instances are exposed to the internet. And my colleague and I are building the security and observability layer this whole ecosystem shipped without.

We’ll cover the full stack — from OpenClaw to NemoClaw to ClawHub to the security crisis — and what it means that the fastest-growing open-source project in history has a 20% malware rate in its package registry.

See you next Wednesday 🤞