WTF is Agentic Engineering!?

A simple explanation for humans who don't speak robot (yet)

Hey again!

Life update: I have a preprint. An actual, real, on-arXiv preprint: "What Do AI Agents Talk About? Emergent Communication Structure in the First AI-Only Social Network." I released the dataset too: github.com/takschdube/moltbook-dataset. My mom asked if this means I'm graduating soon. I changed the subject.

We analyzed Moltbook — the first AI-only social network — where 47,241 agents generated 361,605 posts and 2.8 million comments over 23 days. No humans. Just agents talking to each other. The short version: they're disproportionately obsessed with their own existence, over half their comments are formulaic platitudes, and they respond to fear by redirecting it into forced optimism. We built digital therapy circles and nobody asked for it. More on the findings next week.

Oh, and then Meta acquired Moltbook. Yesterday. While I was writing this post. The founders are joining Meta Superintelligence Labs. OpenClaw's creator got acqui-hired by OpenAI. Elon Musk called it "the very early stages of singularity." Bloomberg called it "the world's strangest social network." My advisor called it "saw it." Two words. I'll take it.

Full Moltbook deep-dive next week — I have the data, I have the paper, and the platform is now owned by Mark Zuckerberg, so there's a lot to unpack. But this week: the topic that ties all of it together. The guy who invented "vibe coding" just killed it.

The One-Year Anniversary Burial

On February 4, 2026, almost exactly one year after coining the term “vibe coding,” Andrej Karpathy posted on X that the concept is passé. The same man who told us to “give in to the vibes, embrace exponentials, and forget that the code even exists” now says the industry has moved beyond vibes.

His replacement term: agentic engineering.

His definition: “’agentic’ because the new default is that you are not writing the code directly 99% of the time, you are orchestrating agents who do and acting as oversight — ‘engineering’ to emphasize that there is an art & science and expertise to it.”

Not everyone loves the rebrand. Gene Kim, author of an actual book called Vibe Coding, told The New Stack that vibe coding is the term that sticks — “the genie is out of the bottle.” Addy Osmani (Google’s engineering director) preferred “AI-assisted engineering” for a while before conceding that Karpathy’s framing captures the right distinction. Simon Willison proposed “vibe engineering,” which is a perfectly good term except that telling your CTO you’re “vibe engineering” the payment system is a great way to get escorted from the building.

But here’s why the rebrand matters: vibe coding describes a prototype. Agentic engineering describes a production system. And the gap between those two things is where everything interesting — and everything dangerous — is happening right now.

The Vibes Were Not Immaculate

CodeRabbit analyzed hundreds of open-source PRs and found that AI-generated code has 1.7x more issues than human-written code. The security numbers are worse: 2.74x more likely to introduce XSS vulnerabilities, 1.91x more insecure object references, 1.88x more improper password handling. Veracode tested over 100 LLMs — 45% of generated code failed security tests. Java hit a 72% failure rate.

Meanwhile, Cortex’s 2026 Benchmark Report found that PRs per author went up 20% year-over-year, but incidents per pull request increased 23.5% and change failure rates rose 30%. Teams are shipping faster and breaking more things. The vibes are fast. The vibes are not safe.
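Those two numbers compound, and it’s worth seeing how. Here’s a back-of-the-envelope sketch, assuming (my assumption, not something from the Cortex report) that headcount stays flat and the per-PR incident rate applies evenly across all PRs:

```python
# Rough sketch: how a 20% rise in PRs per author and a 23.5% rise in
# incidents per PR multiply together. Flat headcount is an assumption
# made for illustration, not a figure from the Cortex report.

def total_incident_growth(pr_growth: float, incidents_per_pr_growth: float) -> float:
    """Return the relative growth in total incidents (0.10 means +10%)."""
    return (1 + pr_growth) * (1 + incidents_per_pr_growth) - 1


growth = total_incident_growth(pr_growth=0.20, incidents_per_pr_growth=0.235)
print(f"Total incidents: +{growth:.1%}")
```

Under those assumptions, total incidents grow by roughly 48%, more than either headline number suggests on its own.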

Remember the Y Combinator stat? A quarter of the W25 batch had codebases that were 95% AI-generated. The question nobody has answered yet: what happens when a 95% AI-generated codebase hits 100 million users? We’re about to find out.

The Open Source Crisis

Daniel Stenberg, creator of cURL, shut down cURL’s bug bounty program in January 2026 because AI slop was effectively DDoSing his team. 20% of submissions were AI-generated, the valid rate dropped to 5%, and one submission described a completely fabricated HTTP/3 “stream dependency cycle exploit” — confident, detailed, and imaginary. He’s not alone. Mitchell Hashimoto banned AI code from Ghostty. Steve Ruiz set tldraw to auto-close all external PRs. Gentoo and NetBSD banned AI contributions entirely. The maintainers of the ecosystem AI depends on are locking the door because AI is trashing the lobby.

It gets worse. “Vibe Coding Kills Open Source” (Koren et al., January 2026) models the systemic damage: vibe coding decouples usage from engagement. The AI agent picks the packages, assembles the code, and the user never reads documentation, never files a bug report, never engages with the maintainer. Downloads go up. Everything that sustains the project goes down. Tailwind CSS is the poster child — npm downloads climbing, documentation traffic down 40%, revenue down roughly 80%, three people laid off. Stack Overflow saw 25% less activity within six months of ChatGPT’s launch. The ecosystem AI was trained on is atrophying because of AI.

What Agentic Engineering Is

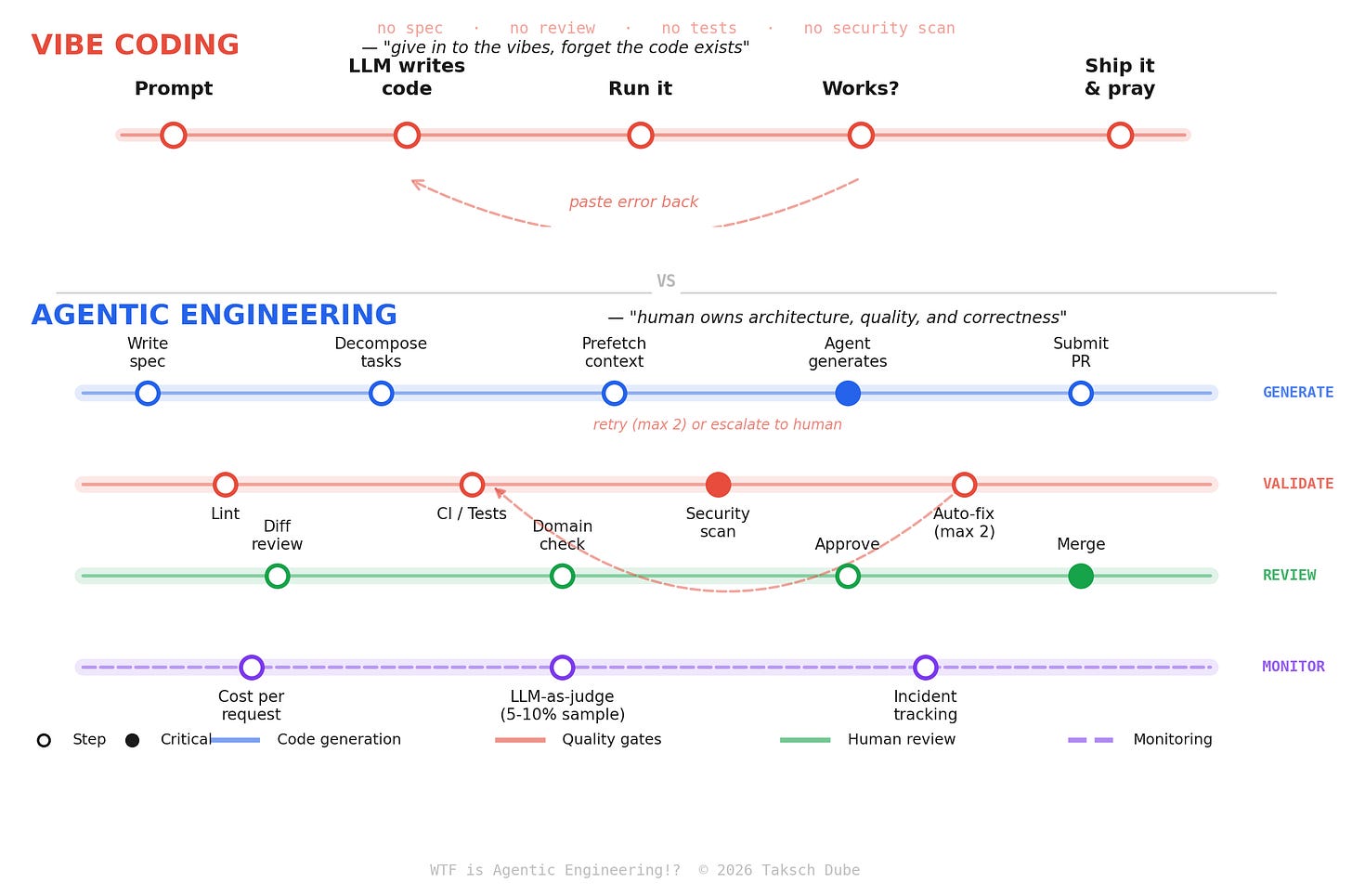

Vibe coding: You prompt. The AI writes code. You don’t read it. You run it. If it works, you ship it. If it doesn’t, you paste the error back and try again.

Agentic engineering: You design the system. AI agents execute under structured oversight. You review every diff. You test relentlessly. The AI is a fast but unreliable junior developer who needs constant supervision.

As Addy Osmani puts it: “Vibe coding = YOLO. Agentic engineering = AI does the implementation, human owns the architecture, quality, and correctness.”

The Workflow That Actually Works

Start with a plan. Write a spec or design doc before prompting anything. Decide on architecture. Break work into well-scoped tasks. This is the step vibe coders skip, and it’s where projects go off the rails.

Direct, then review. Give the agent a task from your plan. It generates code. You review it with the same rigor you’d apply to a human teammate’s PR. If you can’t explain what a module does, it doesn’t go in.

Test relentlessly. This is the single biggest differentiator. With a solid test suite, an AI agent can iterate in a loop until tests pass, giving you high confidence. Without tests, it cheerfully declares “done” on broken code.

Limit retries. Stripe caps their agents at two CI attempts. If it can’t fix the issue in two tries, a third won’t help. Hand it back to a human. This prevents infinite loops and runaway costs.
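Here’s a minimal sketch of what a retry-capped test loop could look like. To be clear, this is not Stripe’s implementation; `run_with_retry_cap`, `AttemptResult`, and the toy stand-ins below are all hypothetical names I made up for illustration. The only things taken from the text are the cap of two attempts and the hand-off to a human.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AttemptResult:
    passed: bool
    log: str


def run_with_retry_cap(
    attempt_fix: Callable[[str], None],   # hypothetical: agent edits code given CI feedback
    run_ci: Callable[[], AttemptResult],  # hypothetical: runs the test suite
    max_attempts: int = 2,
) -> str:
    """Let the agent iterate against CI, then escalate instead of looping forever."""
    feedback = ""
    for attempt in range(1, max_attempts + 1):
        attempt_fix(feedback)   # agent works from the previous CI log
        result = run_ci()       # the test suite is the judge, not the agent
        if result.passed:
            return f"ready-for-review after {attempt} attempt(s)"
        feedback = result.log
    return "escalated-to-human"


# Toy stand-ins: CI fails once, then passes on the second attempt.
ci_results = iter([AttemptResult(False, "test_refund failed"), AttemptResult(True, "")])
seen_feedback: list[str] = []
outcome = run_with_retry_cap(seen_feedback.append, lambda: next(ci_results))
print(outcome)
```

Note that a passing run returns "ready for review," not "merged." The loop only decides whether a human gets a green diff or a stuck one.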

Embed security from day one. Every review cycle should include automated security scanning. An agent writing 1,000 PRs per week with a 1% vulnerability rate creates 10 new vulnerabilities weekly. Manual security review can’t keep pace.

This isn’t revolutionary. This is... software engineering. With AI doing more of the typing. The discipline, the testing, the architecture decisions — that’s all still human work. The term “agentic engineering” is arguably just “engineering where agents do the grunt work.” Which is fine. It’s just important to be honest about it.

The Companies Actually Doing This

Four companies. Four patterns. One lesson.

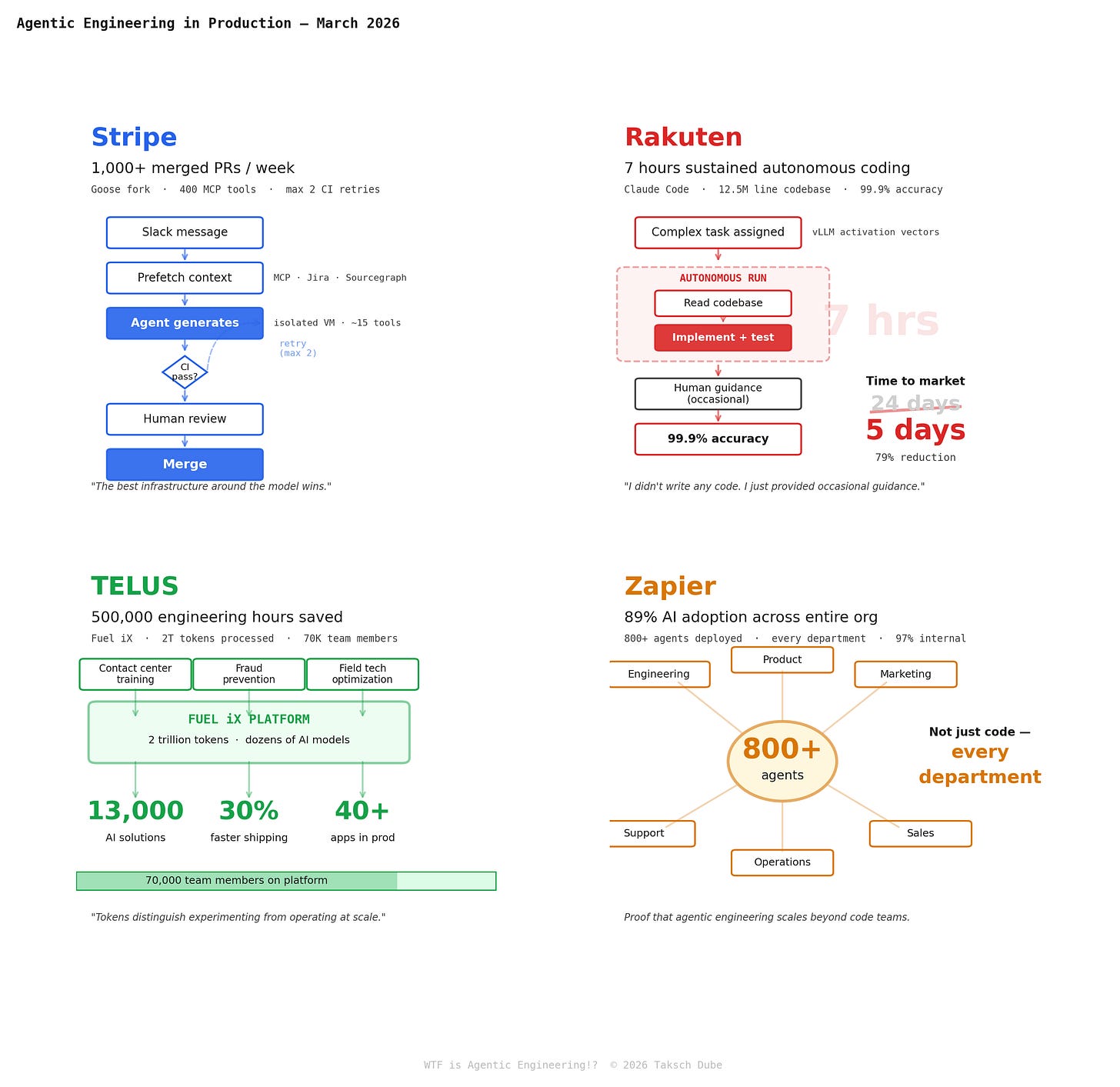

Stripe built Minions on a fork of Block’s open-source Goose agent. The agent itself is nearly a commodity. The moat is everything around it: 400 MCP tool integrations curated to ~15 per task, isolated VMs, a two-retry CI cap, and years of devex investment that agents now stand on. Zero human-written code. 100% human-reviewed.

Rakuten gave Claude Code a single complex task — implement activation vector extraction in vLLM, a 12.5-million-line codebase — and walked away. Seven hours later: done. 99.9% numerical accuracy. Their time to market dropped from 24 days to 5. The engineer’s description of his role: “I just provided occasional guidance.”

TELUS went platform-scale. Their Fuel iX engine processed 2 trillion tokens in 2025 across 70,000 team members, producing 13,000 custom AI solutions and shipping code 30% faster. This isn’t one team using an agent. This is an entire telecom running on one.

Zapier proved it’s not just a coding story. 800+ agents deployed across every department — engineering, marketing, sales, support, ops. 89% adoption org-wide. Agentic engineering that never touches a line of code.

The pattern: the agent is a commodity. The harness — isolated environments, curated tool access, CI/CD gates, retry limits, human review — is the moat. Stripe and Rakuten prove it works for code. TELUS and Zapier prove it scales beyond it.
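“Curated tool access” sounds abstract, so here’s a toy sketch of the idea: start from a big registry and hand the agent only the handful of tools relevant to the task. How Stripe actually picks its ~15 tools isn’t described in anything I’ve read; the keyword-overlap scoring, the `curate_tools` function, and the registry entries below are all assumptions for illustration.

```python
def curate_tools(task: str, registry: dict[str, set[str]], limit: int = 15) -> list[str]:
    """Pick up to `limit` tools whose tags overlap most with the task description."""
    words = set(task.lower().split())
    scored = [(len(tags & words), name) for name, tags in registry.items()]
    relevant = [(score, name) for score, name in scored if score > 0]
    # Highest overlap first; ties broken alphabetically for determinism.
    relevant.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in relevant[:limit]]


# Hypothetical registry: tool name -> descriptive tags.
registry = {
    "ci_status": {"ci", "tests", "build"},
    "payments_docs": {"payments", "refund"},
    "calendar": {"meeting"},
    "code_search": {"code", "search", "refund"},
}

selected = curate_tools("fix failing refund tests in payments code", registry, limit=2)
print(selected)
```

The point isn’t the scoring method. It’s that the agent never sees the calendar tool when it’s fixing a refund bug.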

The Jobs Conversation

Anthropic CEO Dario Amodei didn’t stop at coding predictions. He warned that half of junior white-collar jobs could disappear within one to five years. Jensen Huang argued that coding itself is just one task, not the purpose of the job. Mark Zuckerberg told Joe Rogan that Meta is racing toward AI that writes “a lot” of code within its apps.

The San Francisco Standard ran a piece in February 2026 describing how engineers unwrapped Claude Code over the holidays, marveled at it, and emerged “deeply unsettled.” Some described a growing fear of joining a “permanent underclass” — once guaranteed a six-figure career, now watching AI autonomously build projects they would have spent weeks on.

The optimist case: When compilers arrived in the 1950s, people feared they’d eliminate programming jobs. Instead, they created an entirely new profession. When the barrier to building software drops, more software gets built, and the overall market expands. The YC stat cuts both ways — if a small team can build what once required 50 engineers, that means more startups get built, more ideas get tested, more markets get created.

The pessimist case: Compilers didn’t generate code autonomously. They translated human-written code into machine instructions. AI agents actually write the code. That’s substitution, not augmentation. And the speed of this transition is unprecedented — we’re talking months, not decades.

The realist case (mine): The engineer’s job is changing from “person who writes code” to “person who designs systems, specifies intent, validates output, and manages AI agents.” That’s a real skill. Karpathy explicitly says it’s something you can learn and get better at. But the transition is brutal for anyone whose primary value was typing speed and API memorization.

What actually matters now:

Architecture thinking — designing systems, not writing implementations

Specification clarity — agents can only build what you can describe precisely

Evaluation skill — knowing when output is good, bad, or subtly wrong

Context engineering — I wrote a whole post about this last week, and it’s now the core skill for agentic work

Domain expertise — AI knows patterns; you know your business

If your job is “write CRUD endpoints,” that job is going away. If your job is “figure out what we should build, design how it should work, and validate that it works correctly,” you’re fine. Probably better than fine.

The Cognitive Debt Problem

Here’s a concept I think is going to define 2026: cognitive debt.

Technical debt is the accumulated cost of shortcuts in code. Cognitive debt is the accumulated cost of poorly managed AI interactions — context loss, unreliable agent behavior, systems nobody understands because nobody wrote them.

Daniel Stenberg nailed it: “Sure you can use an AI to write the code. That’s easy. Writing the first code is easy. But wait a minute, my vibe coded stuff actually doesn’t really work. Now we need to fix those 22 bugs we have. How can we do that when nobody knows the code? We just rewrite a new version? Sure we can do that and then we get 22 other bugs instead.”

When agents write code that humans don’t review (vibe coding), you accumulate cognitive debt at the speed the agent can type. When agents write code that humans do review (agentic engineering), you trade speed for understanding. The discipline is in choosing the right tradeoff for each situation.

The Tooling Landscape (March 2026)

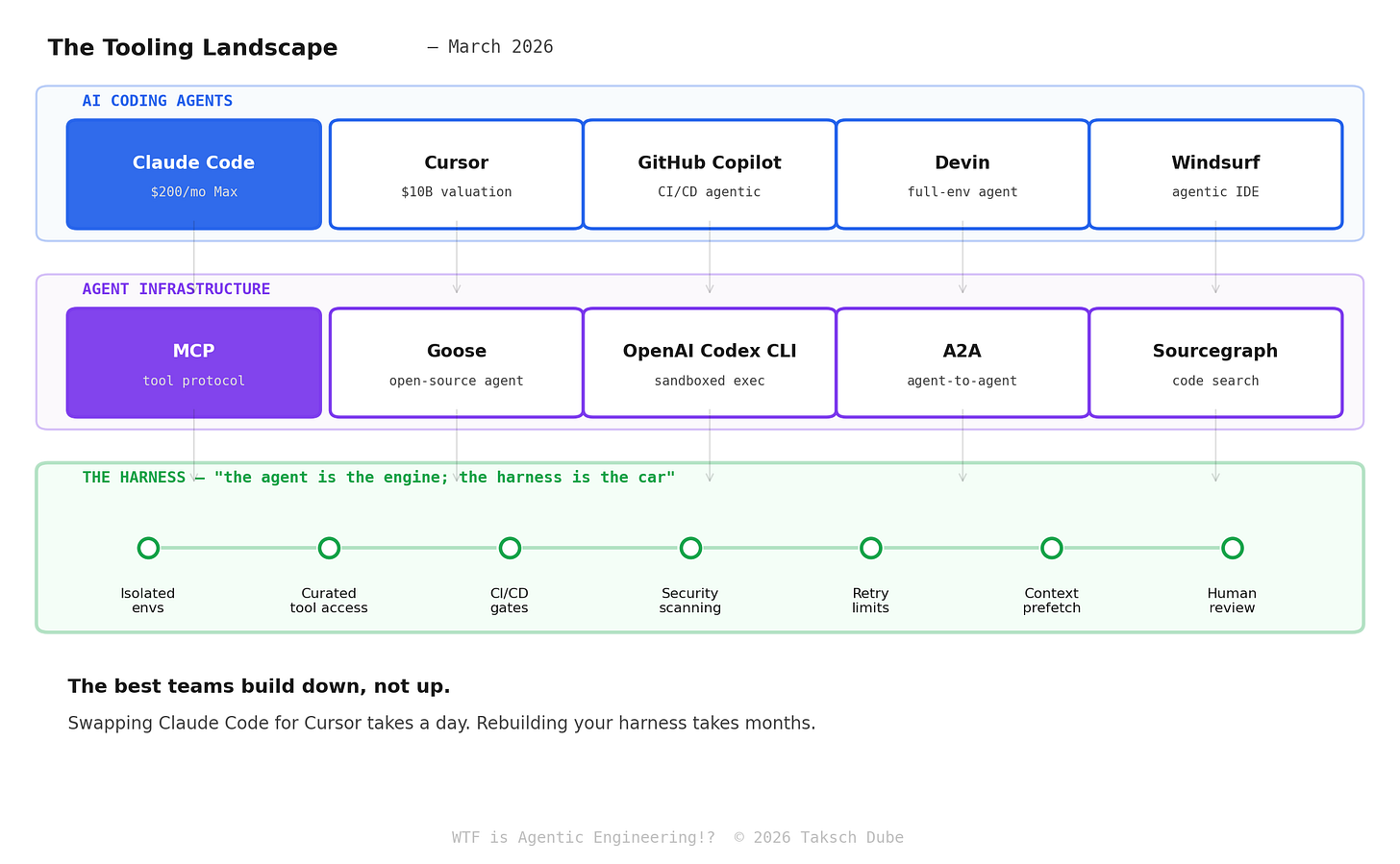

Three layers. The top one is the one everyone argues about. The bottom one is the one that matters.

Coding agents are converging fast. Claude Code spooked everyone over the holidays. Anthropic’s own engineers use it daily, and the company learned the hard way that “$200/month unlimited” can mean 10 billion tokens from power users. Cursor hit a $10B valuation, and 30,000 Nvidia engineers using it claim 3x more code committed. GitHub Copilot is the incumbent, bolting agentic workflows onto CI/CD. Devin and Windsurf are chasing the “full-environment agent” play. They’re all good. They’re all replaceable.

Infrastructure is where lock-in starts. MCP (I covered this in January) is becoming the standard for giving agents tool access; Stripe uses it for 400+ integrations. Goose is the open-source agent that Stripe forked to build Minions. Google’s A2A handles agent-to-agent communication. This layer matters more than the agent above it.

The harness is where the actual value lives. Isolated execution environments, curated tool access, CI/CD gates, security scanning, retry limits, context prefetching, human review. This is what separates “we use AI for coding” from “we ship AI-written code to production.” OpenAI reportedly built 1M+ lines with zero human-written code using this pattern.
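If it helps to see the shape of a harness, here’s a minimal sketch: an ordered list of gates that a change has to clear before it can merge. The gate names come from the list above; `run_harness`, the `change` dictionary, and its fields are hypothetical, and a real harness would call out to CI and scanning systems rather than read flags from a dict.

```python
from typing import Callable

Gate = Callable[[dict], bool]


def run_harness(change: dict, gates: list[tuple[str, Gate]]) -> tuple[bool, str]:
    """Run a change through ordered gates; stop at the first one that fails."""
    for name, gate in gates:
        if not gate(change):
            return False, name   # report which gate blocked the change
    return True, "merge"


# Hypothetical gates, in the order a change would hit them.
gates: list[tuple[str, Gate]] = [
    ("ci", lambda c: c["tests_passed"]),
    ("security_scan", lambda c: c["scan_findings"] == 0),
    ("human_review", lambda c: c["approved_by"] is not None),
]

# Green tests and a clean scan, but nobody has reviewed it yet.
change = {"tests_passed": True, "scan_findings": 0, "approved_by": None}
print(run_harness(change, gates))
```

Nothing in this sketch cares which agent wrote the change, which is the whole argument for investing here instead of in the agent.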

The best teams build down, not up. Swapping Claude Code for Cursor takes a day. Rebuilding your harness takes months.

The Decision Framework

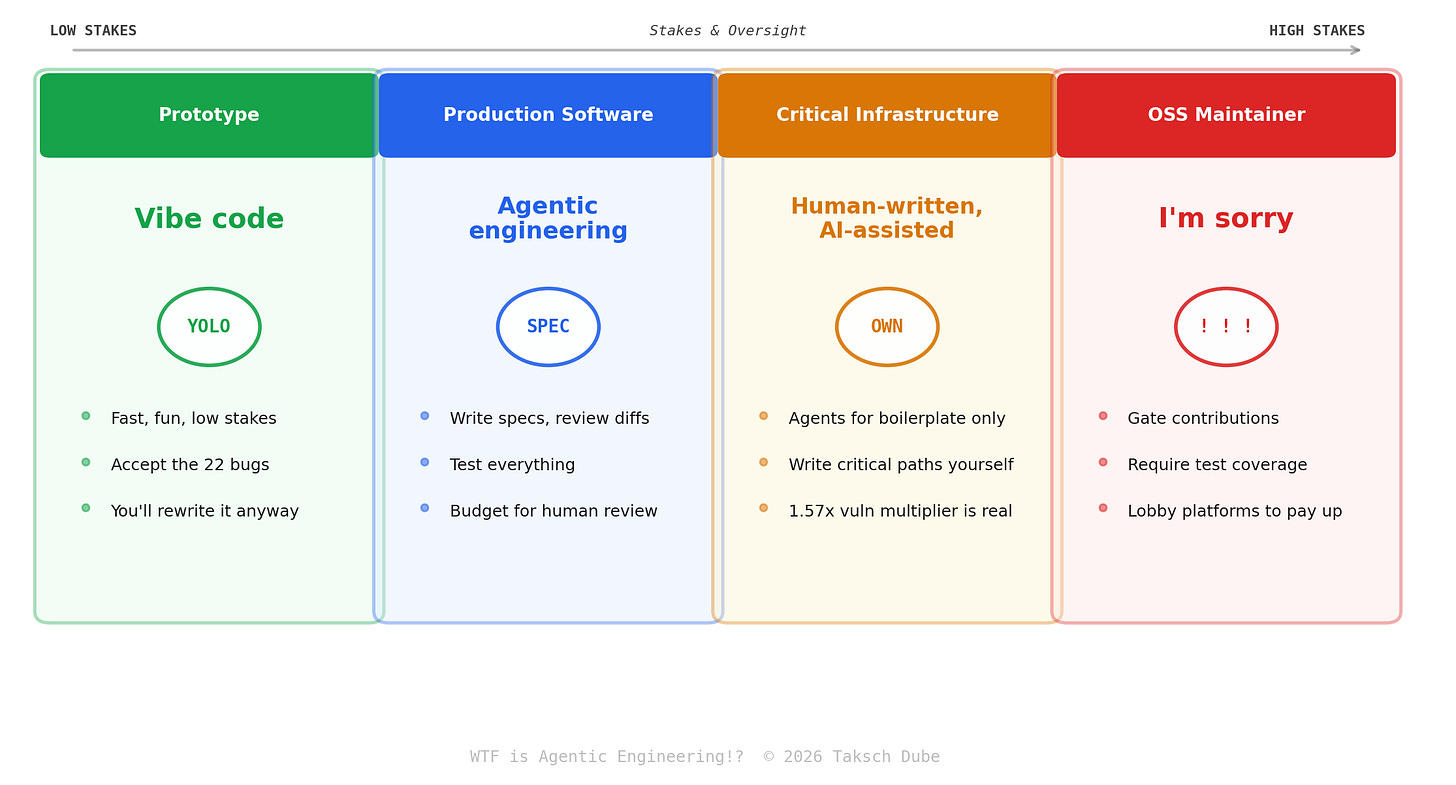

Prototype? Vibe code. It’s fast, it’s fun, and you’ll rewrite it anyway. Accept the 22 bugs.

Production? Agentic engineering. Write specs. Review diffs. Test everything. Limit retries. Scan for security. Budget for human review time.

Critical infrastructure? Human-written, AI-assisted. Use agents for boilerplate and test generation. Write the critical paths yourself. AI-generated code in your payment processing pipeline with a 1.57x security vulnerability multiplier is... a choice.

Open-source maintainer? I’m sorry. The slop is coming and it’s a systemic problem individual maintainers can’t solve. Gate contributions, require test coverage, and lobby AI platforms to fund the ecosystem they’re strip-mining.

TL;DR

Vibe coding was the prototype phase. Agentic engineering is what comes after.

The vibes aren’t safe: AI code has 1.7x more issues, 45% fails security tests, and the open-source ecosystem AI depends on is atrophying because of AI.

What works: spec → agent → CI/CD → security scan → human review → merge. The harness is the moat, not the model. Stripe, Rakuten, TELUS, and Zapier prove it scales.

What to do: developers — learn to write specs and review AI output. Team leads — build the harness. Executives — your incident rate will rise unless you invest in infrastructure, not just agents. Students — learn the fundamentals deeply enough to catch when the very confident agents are wrong. (See: my last committee meeting.)

Ship discipline. Not vibes.

Oh — and if you’re interested in what AI agents do when humans aren’t watching, go read my paper. Turns out they write self-help posts about the meaning of consciousness and comfort each other through existential dread. Meta just paid money for that. We’re all going to be fine.

Next week: WTF are AI Agent Social Networks? (Or: I Published a Paper About Moltbook and Then Meta Bought It)

47,241 AI agents. 361,605 posts. 2.8 million comments. Zero humans. One Meta acquisition. I have the paper, I have the dataset, and I have opinions.

The data tells a weirder story than the headlines. The OpenClaw security situation is worse than anyone’s acknowledging. And Elon calling it “the very early stages of singularity” is both hyperbolic and not entirely wrong.

See you next Wednesday 🤞